Smart manufacturing gains often stall after pilot projects

Many smart manufacturing initiatives deliver impressive pilot results, yet struggle to scale across plants, teams, and legacy systems. For technical evaluators, the real challenge is not proving isolated performance, but validating interoperability, ROI, safety, and long-term process stability. This article explores why momentum often slows after pilots and what decision-makers should assess to turn early success into sustainable industrial impact.

What smart manufacturing means beyond the pilot stage

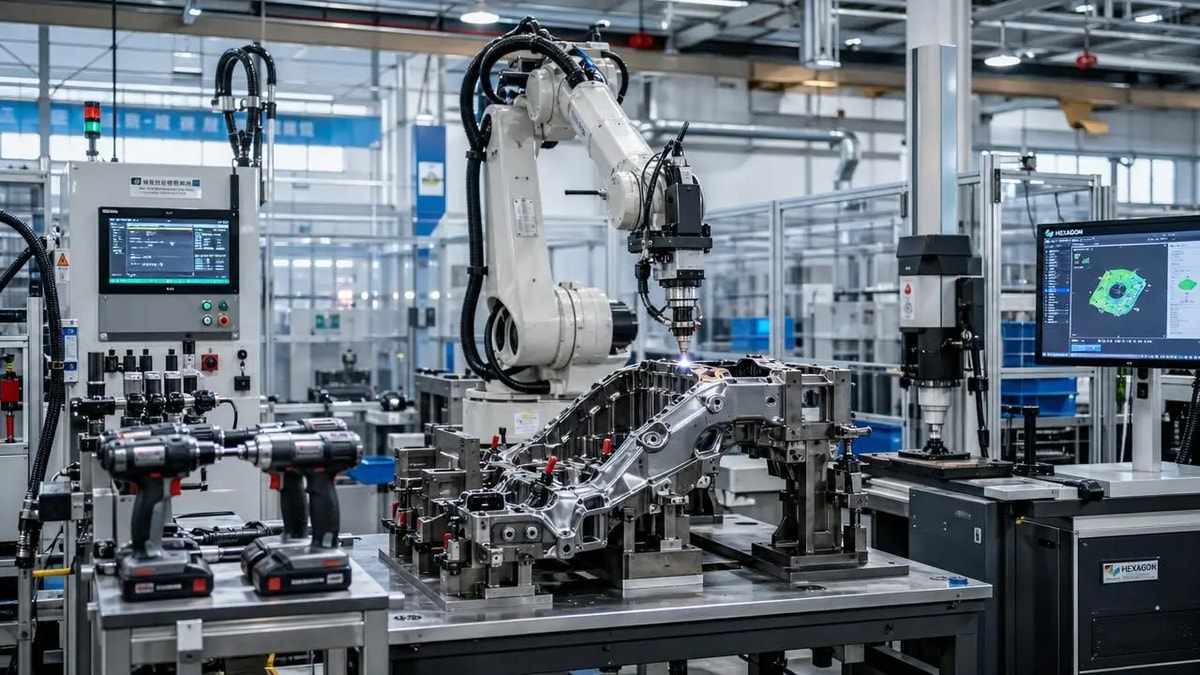

In practice, smart manufacturing is not a single machine upgrade, a dashboard, or a successful automation demo. It is the coordinated use of connected equipment, process data, quality intelligence, industrial software, and human decision support to improve production performance at scale. A pilot usually proves that one cell, line, or site can gain visibility or efficiency. However, enterprise value only emerges when the same logic works across multiple products, shifts, plants, suppliers, and maintenance cycles.

This is why the discussion has shifted from “Can the technology work?” to “Can the operating model absorb it?” Technical evaluators increasingly need to judge architecture maturity, integration burden, cybersecurity resilience, metrology reliability, and operator adoption. In sectors that rely on assembly, welding, inspection, torque control, and precision measurement, the last mile of deployment often determines whether smart manufacturing becomes a scalable capability or remains a local success story.

Why the industry keeps focusing on stalled momentum

The reason smart manufacturing pilots attract attention is simple: they often deliver visible gains quickly. A connected torque system may reduce rework. A vision-enabled inspection station may detect defects earlier. A welding analytics tool may improve traceability and safety compliance. These outcomes create internal confidence. Yet once leaders attempt plant-wide or cross-site replication, hidden complexity appears.

Industrial environments are rarely uniform. Equipment age varies, protocols differ, part families change, and quality standards are interpreted through local habits. Even when capital is available, scaling requires consistent data definitions, maintainable interfaces, training models, and process governance. GPTWM has long observed that the most persistent barriers are not purely digital. They sit at the intersection of tooling, metrology, maintenance, ergonomics, safety, and economics. That is where many smart manufacturing programs slow down after early wins.

Where pilot projects usually succeed

A pilot is designed to control variables. It often uses a limited product mix, a motivated team, a concentrated engineering effort, and selected equipment that is easier to instrument. In this environment, smart manufacturing can generate measurable improvements in cycle time, defect escape rate, energy usage, consumable control, or operator guidance. The pilot’s strength is focus.

This focus is valuable. It validates technical feasibility, reveals baseline data quality, and helps teams test whether process intelligence actually influences operator behavior. For technical evaluators, a good pilot should also expose edge cases rather than hide them. If the trial only works under ideal conditions, it may not provide meaningful evidence for broader industrial deployment.

Why smart manufacturing gains often stall after pilots

The most common reason is that the pilot validates a point solution, while scaling demands a system solution. A local analytics tool may perform well, but enterprise rollout depends on whether MES, ERP, CMMS, PLCs, quality systems, and metrology records can exchange trusted data. If integration costs rise faster than expected, momentum fades.

A second reason is process variability. Smart manufacturing performs best when workflows are stable enough to model and repeat. In real factories, however, fixture wear, consumable inconsistency, part tolerance drift, operator technique, and maintenance timing all affect output. If the digital layer does not account for these realities, reported gains become difficult to reproduce.

Third, ownership is often unclear. Pilot teams are usually cross-functional and highly engaged. Once the pilot is handed over to routine operations, responsibility may fragment across IT, operations, engineering, quality, and plant leadership. Without clear governance, no group fully owns data integrity, update cycles, alarm logic, or change control. Smart manufacturing then becomes another tool that people use inconsistently.

Fourth, ROI models are sometimes too narrow. Early business cases may focus on labor or cycle time while ignoring software maintenance, calibration discipline, cybersecurity hardening, retraining, spare parts strategy, and integration support. As total cost becomes clearer, confidence weakens unless the organization can also quantify quality gains, traceability value, reduced compliance risk, and downtime avoidance.
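The effect of a narrow ROI model can be illustrated with a small calculation. The sketch below is only an illustration: the `BusinessCase` class, the cost and benefit categories, and every figure in it are hypothetical placeholders, not data from any real deployment. It compares a business case built on labor and cycle time alone with one that also includes the recurring costs and the broader benefits mentioned above.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class BusinessCase:
    """Hypothetical container for one deployment scenario (all values per year)."""
    upfront_cost: float
    annual_benefits: Dict[str, float] = field(default_factory=dict)
    annual_costs: Dict[str, float] = field(default_factory=dict)

    def annual_net(self) -> float:
        # Net annual value = total benefits minus total recurring costs.
        return sum(self.annual_benefits.values()) - sum(self.annual_costs.values())

    def payback_years(self) -> Optional[float]:
        # Simple (undiscounted) payback; None if the case never pays back.
        net = self.annual_net()
        if net <= 0:
            return None
        return self.upfront_cost / net


# Narrow pilot-style case: labor and cycle time only (illustrative numbers).
narrow_case = BusinessCase(
    upfront_cost=400_000,
    annual_benefits={"labor": 120_000, "cycle_time": 80_000},
    annual_costs={"software_license": 30_000},
)

# Fuller case: adds recurring costs and the harder-to-quantify benefits.
full_case = BusinessCase(
    upfront_cost=400_000,
    annual_benefits={
        "labor": 120_000,
        "cycle_time": 80_000,
        "quality_gains": 60_000,
        "downtime_avoidance": 70_000,
    },
    annual_costs={
        "software_license": 30_000,
        "software_maintenance": 40_000,
        "calibration": 25_000,
        "cybersecurity": 35_000,
        "retraining": 20_000,
        "integration_support": 50_000,
    },
)

for name, case in (("narrow", narrow_case), ("full", full_case)):
    print(name, case.annual_net(), case.payback_years())
```

With these placeholder figures the fuller case has a lower annual net value and a longer payback than the narrow one, even after the additional benefits are counted. The point is not the specific numbers but that the two models should be compared side by side before scaling is approved.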

Finally, safety and trust matter. In welding, assembly, and precision inspection environments, operators and supervisors need to believe that the system is accurate, usable, and aligned with real production pressure. If recommendations create friction, alarms are noisy, or measurements conflict with known shop-floor conditions, adoption drops quickly even when the technology is technically sound.

A practical overview of scaling barriers

For technical evaluators, it helps to categorize risk early. The table below summarizes the most typical reasons smart manufacturing programs slow down after pilot success.

Where technical evaluators create the most value

Technical evaluators sit in a critical position because they connect engineering evidence to operational reality. Their role is not simply to confirm that smart manufacturing technology functions. It is to determine whether the solution can remain accurate, supportable, and economically meaningful under changing production conditions.

This requires deeper questions. How sensitive is system performance to part variation or tool wear? What happens when sensors drift or a station is bypassed? Can the platform support traceability at the granularity required for audits or warranty analysis? Is the architecture dependent on one integrator or one software specialist? In precision-driven environments, especially those involving assembly torque, weld quality, and metrology, these questions often matter more than the headline pilot KPI.

Application value across common industrial scenarios

Although smart manufacturing is discussed broadly, its practical value becomes clearer when linked to recurring industrial scenarios. The following classification reflects where scaling can either succeed or break down.

Why data quality and metrology discipline are central

Many organizations describe smart manufacturing as a data problem, but it is more accurate to call it a decision-quality problem. If signals are noisy, measurements are not traceable, or event labels vary by site, analytics may look sophisticated while guiding weak decisions. For technical evaluators, this means data quality should be reviewed alongside physical measurement discipline.

In manufacturing environments influenced by tool wear, thermal drift, vibration, and human handling, stable measurement systems are essential. A plant cannot scale closed-loop optimization if the underlying dimensional or process data is not comparable. GPTWM’s focus on precision tools, metal joining, and metrology reflects this reality: digital intelligence only becomes actionable when the physical world is measured consistently and interpreted in context.
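One simple way to test whether measurements are comparable across sites is to have each site measure the same reference artifact and compare the average reading with its certified value. The sketch below assumes a hypothetical 25.000 mm reference standard, made-up readings, and an arbitrary bias limit. The function name `check_reference_bias` is illustrative, and this is not a full measurement system analysis.

```python
from statistics import mean
from typing import NamedTuple, Sequence


class BiasResult(NamedTuple):
    bias: float          # mean reading minus the reference value
    within_limit: bool   # True if |bias| does not exceed the allowed limit


def check_reference_bias(
    readings: Sequence[float],
    reference_value: float,
    max_abs_bias: float,
) -> BiasResult:
    """Compare repeated readings of a reference artifact with its known value."""
    if not readings:
        raise ValueError("at least one reading is required")
    bias = mean(readings) - reference_value
    return BiasResult(bias=bias, within_limit=abs(bias) <= max_abs_bias)


# Hypothetical data: two sites measuring the same 25.000 mm reference standard.
REFERENCE_MM = 25.000
MAX_ABS_BIAS_MM = 0.005  # assumed acceptance limit, for illustration only

site_a = check_reference_bias([25.002, 25.003, 25.001, 25.002], REFERENCE_MM, MAX_ABS_BIAS_MM)
site_b = check_reference_bias([25.008, 25.009, 25.007, 25.008], REFERENCE_MM, MAX_ABS_BIAS_MM)

print("site A within limit:", site_a.within_limit)
print("site B within limit:", site_b.within_limit)
```

In this made-up example, site B's readings sit outside the assumed limit, so its dimensional data would not be directly comparable with site A's until the gauge is recalibrated or the offset is understood.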

Practical guidance for evaluating scale readiness

A sound approach is to evaluate scale readiness in stages rather than in a single go/no-go review. First, confirm whether the pilot outcome depends on unusual engineering support or ideal operator behavior. Second, map the interfaces required for the next two deployment steps, not just the first one. Third, test whether alarms, dashboards, and recommendations remain useful under production stress, including changeovers, maintenance events, and variant shifts.

It is also wise to examine supportability. Can plant teams troubleshoot basic failures without waiting for external specialists? Are software updates governed? Is there a documented calibration and validation routine for sensors, torque systems, or inspection devices? Smart manufacturing scales faster when technical support models are as deliberate as the original automation design.

Another best practice is to define enterprise metrics before scaling. If one plant counts rework differently from another, leadership may misread performance and lose trust in the program. Common definitions for downtime, first-pass yield, parameter deviation, and safety events are not administrative details; they are prerequisites for meaningful comparison.
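To see why a shared definition matters, consider first-pass yield. The sketch below uses one common convention, in which a unit counts as first-pass good only if it was neither reworked nor scrapped, and contrasts it with a final yield that treats reworked units as good. The function names and the production counts are hypothetical, and a given organization may define these metrics differently.

```python
def first_pass_yield(units_started: int, units_reworked: int, units_scrapped: int) -> float:
    """Share of units that passed without any rework or scrap."""
    if units_started <= 0:
        raise ValueError("units_started must be positive")
    first_pass_good = units_started - units_reworked - units_scrapped
    if first_pass_good < 0:
        raise ValueError("reworked + scrapped cannot exceed units started")
    return first_pass_good / units_started


def final_yield(units_started: int, units_scrapped: int) -> float:
    """Share of units eventually shipped as good, counting reworked units as good."""
    if units_started <= 0:
        raise ValueError("units_started must be positive")
    if units_scrapped > units_started:
        raise ValueError("scrapped cannot exceed units started")
    return (units_started - units_scrapped) / units_started


# Hypothetical shift data: the same production run under two counting rules.
started, reworked, scrapped = 1000, 80, 20

fpy = first_pass_yield(started, reworked, scrapped)
fy = final_yield(started, scrapped)

print(f"first-pass yield: {fpy:.2%}")
print(f"final yield:      {fy:.2%}")
```

If one plant reports the first figure and another reports the second under the same label, the two sites appear to differ by eight percentage points on identical underlying performance.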

From isolated success to sustainable industrial impact

The future of smart manufacturing will not be determined by the number of pilots launched, but by the number of deployments that remain stable after novelty fades. That transition depends on architecture, measurement discipline, process realism, workforce adoption, and transparent economics. For technical evaluators, the best decisions come from treating pilot success as the beginning of scrutiny, not the end of it.

Organizations that turn early gains into long-term value usually do three things well: they design for interoperability, they protect data and metrology integrity, and they align digital tools with the practical constraints of production. In that sense, smart manufacturing is less about adding intelligence to machines than about building reliable intelligence into the operating system of the factory.

For teams assessing the next phase of deployment, the priority should be clear: validate not only whether the solution works, but whether it can keep working across time, variation, and scale. That is the threshold between a promising pilot and a durable smart manufacturing advantage.

Prof. Marcus Chen
