Where technology integration fails on the factory floor

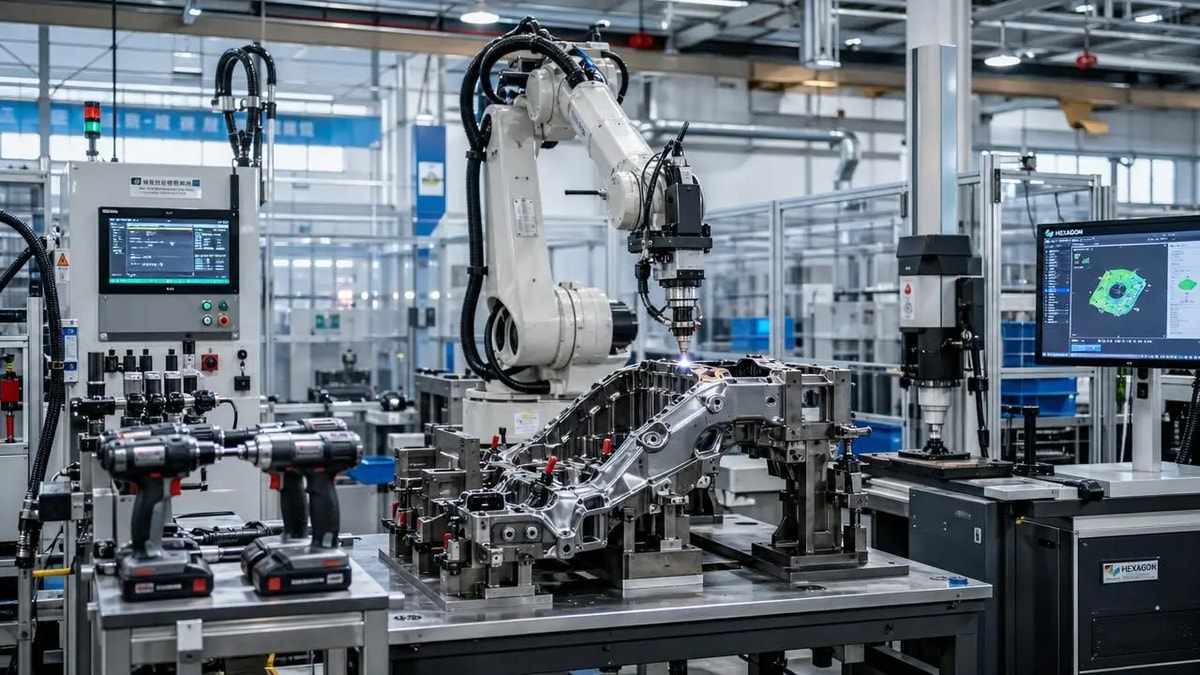

On the factory floor, technology integration often fails not because the tools are weak, but because execution, data flow, and operator realities are misaligned. For project managers and engineering leaders, the real challenge is turning digital investments into measurable uptime, quality, and coordination. This article explores where breakdowns begin and how smarter integration can close the gap between strategy and production.

Why does technology integration fail even after companies buy the right systems?

The short answer is that purchasing advanced tools is not the same as achieving effective technology integration. Many factories invest in MES platforms, sensor networks, torque monitoring, welding automation, traceability software, or digital metrology systems expecting immediate gains. Yet the factory floor operates through people, routines, maintenance windows, shift changes, legacy machines, and quality pressures. If the new layer of technology does not fit those realities, adoption stalls.

In many sectors, from automotive and aerospace to construction equipment, electronics, and general fabrication, failure starts with a strategic mismatch. Management may define integration as a software rollout, while operators experience it as extra screens, duplicate data entry, slower cycle time, or unclear alarms. Engineering may focus on system capability, but production supervisors care about throughput and recovery time. When these viewpoints are not aligned early, technology integration becomes a technical project without operational ownership.

Another common issue is fragmented implementation. A plant may connect a welding cell, an inspection station, and a power tool platform separately, but never establish one usable workflow. Data exists, but it does not move at the right speed or reach the right decision-maker. The result is a modernized patchwork rather than an integrated production environment.

Where do the first breakdowns usually appear on the factory floor?

The first breakdowns usually appear in the “last mile” of execution, where equipment interfaces with operators and process owners. This is especially visible in industrial assembly, metal joining, inspection, and maintenance workflows. A system may work in testing, but under production pressure the weak points become obvious.

One frequent point of failure is data capture. Sensors may record torque, heat input, dimensional deviations, or machine status, but the data format is inconsistent across brands or stations. If information cannot be normalized and interpreted quickly, project managers end up with dashboards that look impressive but add little control. Technology integration fails here because data visibility is mistaken for process intelligence.
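The normalization step described above can be sketched roughly as follows. This is a minimal illustration, not a reference implementation: the two vendor payload shapes, the field names (`stn`, `tq`, `station_id`, `torque_lbf_ft`), and the `TorqueReading` type are all hypothetical, chosen only to show how differently formatted records can be mapped onto one shared shape and one unit.

```python
from dataclasses import dataclass

# Conversion factors to newton-metres. The unit labels are illustrative.
TO_NM = {"Nm": 1.0, "lbf_ft": 1.3558179483, "lbf_in": 0.1129848290}


@dataclass(frozen=True)
class TorqueReading:
    """Shared record shape that every station's data is mapped onto."""
    station: str
    torque_nm: float


def normalize(raw: dict) -> TorqueReading:
    """Map one vendor-specific record onto the shared TorqueReading shape."""
    # Hypothetical vendor A payload: {"stn": "A1", "tq": 25.0, "unit": "Nm"}
    if "tq" in raw:
        return TorqueReading(raw["stn"], raw["tq"] * TO_NM[raw["unit"]])
    # Hypothetical vendor B payload: {"station_id": "B7", "torque_lbf_ft": 18.0}
    if "torque_lbf_ft" in raw:
        return TorqueReading(raw["station_id"], raw["torque_lbf_ft"] * TO_NM["lbf_ft"])
    # Fail loudly rather than silently storing data nobody can interpret.
    raise ValueError(f"unrecognized record format: {sorted(raw)}")
```

Rejecting unknown formats explicitly, instead of passing them through, is one way to keep a dashboard from filling up with values that cannot be compared across stations.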

A second breakdown appears in workflow timing. If operators must wait for logins, barcode validation, recipe loading, or manual approval before the next step, technology becomes a bottleneck. In fast-cycle manufacturing, even small delays can trigger line imbalance, WIP buildup, and workarounds. Those workarounds then bypass the very controls the integration was supposed to enforce.
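To make the timing effect concrete, here is a small worked calculation. The shift length, cycle time, and added delay are assumed numbers for illustration only, not figures from any specific plant.

```python
def units_per_shift(shift_seconds: int, cycle_seconds: int) -> int:
    """Whole units one station can complete in a shift at a fixed cycle time."""
    if cycle_seconds <= 0:
        raise ValueError("cycle_seconds must be positive")
    return shift_seconds // cycle_seconds


SHIFT_SECONDS = 8 * 60 * 60  # assumed 8-hour shift with no breaks
BASE_CYCLE = 60              # assumed cycle time in seconds
ADDED_DELAY = 5              # assumed login, scan, or approval wait per cycle

baseline = units_per_shift(SHIFT_SECONDS, BASE_CYCLE)
with_delay = units_per_shift(SHIFT_SECONDS, BASE_CYCLE + ADDED_DELAY)
lost_units = baseline - with_delay

print(baseline, with_delay, lost_units)  # 480 443 37
```

Under these assumptions, a five-second wait per cycle costs 37 units per shift at a single station, which is the kind of loss that pushes operators toward workarounds.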

A third failure point is accountability. When an issue occurs, who owns the response: IT, maintenance, quality, process engineering, or the vendor? Without clear governance, teams lose confidence in the integrated system. GPTWM has observed that even technically sound deployments struggle when fault response and change management are poorly defined.

How can project managers tell whether the problem is technical, organizational, or both?

Project managers should avoid framing technology integration as only a software or equipment issue. In practice, failures are often mixed. A useful diagnostic method is to separate symptoms into three layers: system performance, process design, and human adoption.

If the system suffers from unstable communication, device incompatibility, poor network resilience, or unreliable APIs, the problem is technical. If the system works but creates duplicate approvals, slow routing, unnecessary alerts, or unclear escalation paths, the problem is process design. If the tools are available but users ignore them, mistrust them, or return to manual shortcuts, the issue is adoption and operating culture.

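A simple diagnostic table can help teams identify where technology integration is losing value before they expand the project further.

| Layer | Typical symptoms |
| --- | --- |
| System performance (technical) | Unstable communication, device incompatibility, poor network resilience, unreliable APIs |
| Process design | Duplicate approvals, slow routing, unnecessary alerts, unclear escalation paths |
| Human adoption | Users ignore the tools, mistrust them, or return to manual shortcuts |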
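The three-layer split can also be sketched as a small triage helper that counts logged symptoms per layer. The symptom tags and the `triage` function are hypothetical names introduced for illustration. A real plant would define its own tags from its issue log.

```python
from collections import Counter

# Symptom tags grouped by layer. The tag names are illustrative assumptions.
LAYERS = {
    "technical": {
        "unstable_communication", "device_incompatibility",
        "weak_network", "unreliable_api",
    },
    "process_design": {
        "duplicate_approval", "slow_routing",
        "unnecessary_alert", "unclear_escalation",
    },
    "adoption": {"tool_ignored", "tool_mistrusted", "manual_shortcut"},
}


def triage(symptoms: list[str]) -> Counter:
    """Count logged symptoms per layer; unknown tags go to 'unclassified'."""
    counts: Counter = Counter()
    for symptom in symptoms:
        for layer, tags in LAYERS.items():
            if symptom in tags:
                counts[layer] += 1
                break
        else:
            # No layer matched, so keep the symptom visible instead of dropping it.
            counts["unclassified"] += 1
    return counts
```

If counts land in more than one layer, that supports the point that failures are often mixed and should not be handed to a single team.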

What are the most common mistakes in factory floor technology integration projects?

One major mistake is trying to integrate everything at once. Large transformation programs often promise full visibility across machines, tools, welding systems, quality stations, and warehouse flows. But broad scope can hide weak foundations. If one line, one product family, or one process window has not been stabilized, scaling only spreads inconsistency faster.

Another mistake is underestimating legacy equipment behavior. Older assembly tools, hydraulic units, metrology instruments, and semi-automated stations may still be productive, but they were not designed for modern connectivity. Teams often assume adapters will solve the issue. In reality, retrofit technology integration requires decisions about signal reliability, maintenance burden, calibration traceability, and cybersecurity exposure.

A third mistake is measuring success too narrowly. If the KPI is only installation completion or system uptime, the project may appear healthy while production outcomes remain unchanged. Better measures include first-pass yield, deviation response time, operator intervention rate, tool utilization, audit readiness, and unplanned downtime reduction. For engineering leaders, the value of technology integration must be visible in production control, not just infrastructure status.
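Two of these outcome measures reduce to simple ratios. The sketch below uses common textbook definitions, which are an assumption here since the article does not define them: first-pass yield as units that pass without rework divided by units started, and downtime reduction as the relative drop against a baseline period.

```python
def first_pass_yield(units_started: int, units_passed_first_time: int) -> float:
    """Share of started units that passed inspection without rework."""
    if units_started <= 0:
        raise ValueError("units_started must be positive")
    return units_passed_first_time / units_started


def downtime_reduction(baseline_hours: float, current_hours: float) -> float:
    """Relative reduction in unplanned downtime versus a baseline period."""
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    return (baseline_hours - current_hours) / baseline_hours
```

For example, with illustrative numbers, 188 good units out of 200 started gives a first-pass yield of 0.94, and a drop from 40 to 30 hours of unplanned downtime is a reduction of 0.25.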

There is also a cultural mistake: treating operator knowledge as secondary. On the factory floor, experienced technicians often understand hidden constraints that no dashboard captures, such as fixture wear, cable damage patterns, weld spatter effects, or environmental influences on measurement. Ignoring that knowledge leads to digital systems that look logical in meetings but fail during shifts.

How should managers prioritize technology integration when budget and downtime are limited?

When resources are constrained, the best technology integration strategy is not to digitize the most visible process, but the one where information loss causes the highest operational cost. That may be torque traceability in safety-critical assembly, weld parameter control in repair operations, measurement data capture in precision inspection, or maintenance visibility on bottleneck assets.

A practical prioritization framework starts with four questions. First, where do defects or delays become expensive fastest? Second, where is decision-making currently based on incomplete or late data? Third, which process involves repeated manual re-entry or paper-based approval? Fourth, where can integration improve both productivity and compliance at the same time?
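One way to apply the four questions is to score each candidate process against them and rank the totals. The 0 to 3 scale, the equal weighting, and the field names below are assumptions made for this sketch. The article itself does not prescribe a scoring scheme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """One candidate process, scored 0 (low) to 3 (high) on each question."""
    name: str
    cost_of_failure: int  # Q1: how fast do defects or delays become expensive?
    data_gap: int         # Q2: how incomplete or late is decision data today?
    manual_reentry: int   # Q3: how much re-entry or paper approval is involved?
    dual_benefit: int     # Q4: can integration help productivity and compliance?

    @property
    def score(self) -> int:
        # Equal weights are an assumption; a plant may weight questions differently.
        return (self.cost_of_failure + self.data_gap
                + self.manual_reentry + self.dual_benefit)


def rank(candidates: list[Candidate]) -> list[Candidate]:
    """Return candidates ordered from highest to lowest total score."""
    return sorted(candidates, key=lambda c: c.score, reverse=True)
```

The ranking is only as good as the scores, so the scoring session is a natural place to involve operators and supervisors, not just engineering.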

For many plants, a phased rollout works best. Phase one should focus on one process with high repeatability and measurable impact. Phase two should connect that process to adjacent workflows such as quality verification, maintenance response, or material routing. Phase three can expand analytics and cross-line visibility. This staged approach reduces disruption and creates evidence that supports wider investment.

GPTWM’s perspective is that strong industrial intelligence comes from “stitching” process, equipment, and human signals together. That is especially true in precision tools, welding, and metrology environments, where the gap between field reality and enterprise reporting can be wide. Project managers who prioritize based on process criticality, not vendor promises, usually see stronger returns.

What does successful technology integration look like in real operations?

Successful technology integration is often less dramatic than expected. It does not always mean a fully autonomous line or a complex digital twin. More often, it means the right information appears at the right step, in a form that helps the next action happen faster and more accurately.

In a successful assembly environment, a connected torque tool automatically verifies settings, records results, flags exceptions, and routes them without extra operator burden. In a successful welding environment, process parameters, safety checkpoints, and post-join inspection records are linked so quality teams can trace causes instead of hunting for missing records. In a successful metrology workflow, measurement data is not trapped in isolated devices but feeds decisions about tool wear, rework, and process drift.
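The torque case can be sketched as a tolerance check that records every result but only routes the exceptions. The `TorqueSpec` type, the status labels, and the function names are hypothetical. A real tool controller exposes its own interface, which this sketch does not attempt to represent.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TorqueSpec:
    target_nm: float
    tolerance_nm: float  # allowed deviation on either side of the target


def check_fastening(spec: TorqueSpec, measured_nm: float) -> str:
    """Classify one fastening result as 'ok', 'low', or 'high'."""
    if abs(measured_nm - spec.target_nm) <= spec.tolerance_nm:
        return "ok"
    return "low" if measured_nm < spec.target_nm else "high"


def route_exceptions(
    spec: TorqueSpec, results: list[tuple[str, float]]
) -> list[tuple[str, float, str]]:
    """Return only out-of-tolerance results as (joint_id, measured_nm, status)."""
    exceptions = []
    for joint_id, measured_nm in results:
        status = check_fastening(spec, measured_nm)
        if status != "ok":
            exceptions.append((joint_id, measured_nm, status))
    return exceptions
```

Because in-tolerance results never reach a person, the operator sees a prompt only when something actually needs attention, which is the "no extra operator burden" condition described above.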

Another sign of success is low-friction adoption. Operators do not need to invent workarounds, maintenance teams know how to troubleshoot the connected environment, and supervisors trust the exceptions they see. This is where technology integration becomes operational discipline rather than a separate digital initiative.

Before launching or expanding a project, what should engineering leaders confirm first?

Before expanding any technology integration program, engineering leaders should confirm six basics. First, define the production problem in plain operational terms, such as scrap, rework, delay, or traceability risk. Second, identify the process owner who will use the output daily. Third, map the data path from source to action, not just from device to database. Fourth, test how the new process behaves under shift pressure, maintenance interruption, and mixed product conditions. Fifth, set a small group of outcome KPIs that matter to both engineering and production. Sixth, establish who owns corrective action when the system detects an issue.

These checks matter across industries because factory floor integration is never only about hardware or software. It is about whether intelligence can survive contact with production reality. That is why the strongest projects treat technology integration as a coordination system linking tools, people, standards, and timing.

If you need to confirm the right direction for a new deployment, a retrofit, or a multi-site rollout, the first discussion should cover process bottlenecks, legacy equipment constraints, data ownership, operator workflow impact, expected ROI window, and vendor interoperability. With those points settled, it becomes much easier to judge scope, timeline, technical requirements, and the cooperation model before larger investments are committed.

Prof. Marcus Chen
